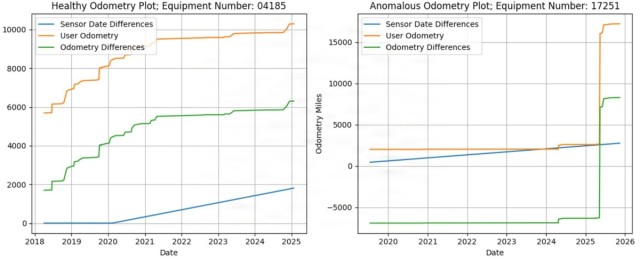

In an era where billion-dollar decisions are increasingly driven by data, the Army faces a critical challenge: data integrity. An analysis of 4,097 unique Caterpillar D7 Dozer records, containing 196,516 observations, revealed that over 82% of the observations were useless; roughly 62% of all Dozers had obvious anomalies such as negative odometry changes. When nearly two-thirds of vehicles report impossible odometer values, how can we trust that the complex data underpinning our readiness, business, and modernization efforts is any more reliable? The stakes are high, and the need for accurate data has never been more urgent.

Introduction

The Army of the future aims to harness technological advancements in artificial intelligence (AI) and analytics. To realize this vision, the Army has invested billions of dollars into platforms that drive us toward this end state. However, as the adage goes, “Garbage in, garbage out.” The center of gravity for analytics and AI is not the technical capabilities of the models, but the quality of data on which those models are trained. While the Army generates massive amounts of data daily, the incentive structures governing data reporting often lead to the neglect of data quality, ultimately creating flawed analysis. Recent efforts to consolidate business and readiness data into the Vantage platform are a step in the right direction but fail to address the underlying issue. Without a concerted effort to improve data quality, the critical and expensive AI systems that Soldiers rely on will risk producing misleading or hazardous outputs.

Even as sensors automate more of the data collection process, the Army continues to rely on large amounts of manual data entry. This ties data quality to countless individual decisions, many of which result in inaccurate or incomplete entries. Inaccurate data entry does not stem from apathy or malice, but from a misaligned incentive structure that fails to reward good data. High operational tempo, competing requirements, and a cultural acceptance of checking the box foster inaccurate reporting throughout the Army. The Army’s approach of enforcing reporting requirements increases compliance, not accuracy. Three key factors define the incentive structure that drives each data entry task: direct benefits, direct costs, and organizational pressures.

- Direct Benefits. Does the data collection bring value to me, simplify my job, or improve my mission outcomes?

- Direct Costs. How much time and energy does accurate data require? Are those resources better spent on higher priorities?

- Organizational Pressures. What policies and regulations apply? Are there repercussions for reporting deficiencies?

With these principles, we examine how analytic efforts have been stunted by poor data and the steps the Army must take to address the underlying issues.

Vignette: Maintainer Utilization

Manpower management is critical for sustaining long-term fleet health. Under Army Regulation (AR) 570-4, Manpower Management, maintenance personnel are required to record their work in Global Combat Support System-Army (GCSS-Army). Commanders use these metrics, expressed as a utilization rate, to assess readiness. Under AR 750-1, Army Materiel Maintenance Policy, the standard for utilization is 50% for military and 85% for civilian personnel. Commanders are assessed on utilization rate quarterly, introducing incentives that can distort data quality.

Utilization rates consist of direct and indirect hours. Direct labor toward maintenance tasks is recorded daily or on completion of a work order, while indirect hours, such as leave, parts delivery, or training, are recorded as needed. This information is recorded by maintainers who are often juggling other priorities. In a typical month of data, over 50% of entries report 0.1 or 0.2 hours, implying that roughly half of all maintenance tasks took 12 minutes or less. The spike in low values and the quantity of outliers cast doubt on the validity of the data. It is difficult to distinguish which entries accurately reflect short tasks and which are just dummy values.
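The share of very short entries can be checked with a few lines of code. The sketch below is illustrative only: the function name and the sample values are assumptions made for this example, not GCSS-Army fields or real data.

```python
def share_of_short_entries(hours, threshold=0.2):
    """Return the fraction of entries at or below `threshold` hours."""
    if not hours:
        return 0.0
    # Small tolerance so a value stored as the float 0.2 still counts.
    short = sum(1 for h in hours if h <= threshold + 1e-9)
    return short / len(hours)


# Made-up sample of direct-hour entries (hypothetical, not real data).
sample_hours = [0.1, 0.2, 0.1, 3.5, 0.2, 1.0, 0.1, 8.0, 0.2, 2.0]
threshold = 0.2  # 0.2 hours is 12 minutes

share = share_of_short_entries(sample_hours, threshold)
print(f"{share:.0%} of entries are {threshold * 60:.0f} minutes or less")
# prints: 60% of entries are 12 minutes or less
```

A check like this only shows how common short entries are. It cannot tell a genuine 6-minute task from a dummy value.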

The technical manual for each piece of equipment contains a maintenance allocation chart (MAC), which provides the doctrinal ground truth for expected task durations. Comparing GCSS-Army reported hours against MAC standards reveals consistent underreporting. Most direct-hour entries fall under one hour, even for tasks that require several hours in the MAC. The frequent underreporting likely reflects Soldiers entering arbitrary values to satisfy daily reporting requirements.
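A comparison of this kind might look like the following minimal sketch. The task names, MAC durations, and the 50% cutoff are hypothetical values chosen for illustration. Real durations come from each technical manual, and this is not the method used in the analysis described here.

```python
from dataclasses import dataclass


@dataclass
class WorkEntry:
    task: str
    reported_hours: float


# Hypothetical MAC durations in hours (illustrative values only).
MAC_HOURS = {"replace_track_pad": 4.0, "service_air_filter": 0.5}


def flag_underreported(entries, mac_hours, min_ratio=0.5):
    """Return entries reporting less than `min_ratio` of the MAC duration."""
    flagged = []
    for entry in entries:
        expected = mac_hours.get(entry.task)
        if expected is None:
            continue  # no MAC standard available for this task
        if entry.reported_hours < min_ratio * expected:
            flagged.append(entry)
    return flagged


entries = [
    WorkEntry("replace_track_pad", 0.1),   # far below the 4.0-hour standard
    WorkEntry("replace_track_pad", 3.8),   # close to the standard
    WorkEntry("service_air_filter", 0.4),  # close to the standard
    WorkEntry("unlisted_task", 0.1),       # skipped: no standard to compare
]
flagged = flag_underreported(entries, MAC_HOURS)
for entry in flagged:
    print(entry.task, entry.reported_hours)
```

The cutoff is a judgment call. A stricter ratio flags more entries but also catches more legitimate fast work.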

While there is no ability to visualize utilization data natively in GCSS-Army, commanders can use the U.S. Army Combined Arms Support Command sustainment enterprise analytic manpower reporting tool for utilization summaries. When aggregated, the low-frequency, high-value outliers balance out the numerous near-zero entries, obfuscating quality issues. Despite appearing usable, the data is incoherent and unviable for analysis.
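The masking effect of aggregation can be shown with a small made-up sample. The values below are assumptions for illustration, not drawn from any system of record. A handful of large outliers pulls the mean up to a plausible-looking figure while the median shows what a typical entry actually contains.

```python
from statistics import mean, median

# Made-up entries: many near-zero values plus two large outliers.
reported_hours = [0.1] * 6 + [0.2] * 2 + [12.0, 16.0]

avg = mean(reported_hours)    # what an aggregate summary reflects
mid = median(reported_hours)  # what a typical entry looks like

print(f"mean: {avg:.2f} hours, median: {mid:.2f} hours")
# prints: mean: 2.90 hours, median: 0.10 hours
```

Reporting the median or the full distribution alongside the average is one simple way to keep such issues visible in a summary.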

Incentive Analysis

In this vignette, all three aspects of incentive — direct benefits, direct costs, and organizational pressures — contribute to poor data quality in GCSS-Army man-hour reporting.

Direct Benefits

For the Soldier entering the data, the value is minimal. Individuals inputting hours are rarely using the data for decision making. Even if units wanted to leverage the information, GCSS-Army lacks native visualization tools, making it a challenge to use the data for planning or personnel allocation.

Direct Costs

Accurate data entry is time consuming and tedious. Freeform manual entry, divorced from any alignment to the MAC, leaves significant room for error. Soldiers must invest effort upfront in tracking and reporting their work to produce precise entries, often without clear guidance or immediate benefit. The task discourages thorough reporting, particularly under high operational tempo.

Organizational Pressures

Soldiers face strong external incentives to meet reporting requirements. Many units emphasize high utilization rates, sometimes pushing for 100% compliance regardless of actual workload. The real or perceived risk of underreporting often outweighs any benefit of accuracy.

Taken together, these factors create a feedback loop that perpetuates poor-quality data. Soldiers have little incentive to be precise; the process is cumbersome; and leadership pressure prioritizes appearance over accuracy. Even when aggregate data appears reasonable, the underlying entries are often unreliable, undermining analytics and decision making. Addressing this issue requires realigning incentives so that accurate reporting carries tangible benefits and is reinforced by effective systems and leadership practices.

Analytic Limitations

The Army aspires to harness maintenance data for AI-enabled, predictive readiness. In theory, decades of maintenance and usage records should make this attainable. In practice, the data required for these forecasts is deficient. As noted earlier, an analysis of thousands of Caterpillar D7 Dozer records found that over 82% of observations were unusable and that roughly 62% of Dozers showed obvious anomalies such as negative odometer changes. Similar quality issues can be found across ground-vehicle data. For example, systems of record show that over 40% of the typical unit’s dispatches are overdue, deviating heavily from the ground truth. Whether they are utilization rates, odometry data, dispatches, or some other metric, missing and erroneous entries produce inconsistent baselines and prohibit effective analysis.
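One of the simplest anomaly checks is a search for odometer readings that decrease over time. The sketch below assumes each vehicle's readings are already in chronological order. The function names, vehicle IDs, and values are hypothetical, and this is not presented as the method behind the figures above.

```python
def negative_odometer_changes(readings):
    """Return (index, previous, current) for each reading below the prior one.

    `readings` holds one vehicle's odometer values in chronological order.
    """
    return [
        (i, prev, cur)
        for i, (prev, cur) in enumerate(zip(readings, readings[1:]), start=1)
        if cur < prev
    ]


def share_of_vehicles_with_anomalies(fleet):
    """Return the fraction of vehicles with at least one negative change."""
    if not fleet:
        return 0.0
    bad = sum(1 for r in fleet.values() if negative_odometer_changes(r))
    return bad / len(fleet)


# Made-up fleet keyed by a hypothetical vehicle ID.
fleet = {
    "D7-001": [100, 150, 140, 200],  # drops from 150 to 140
    "D7-002": [10, 20, 30],          # consistent
    "D7-003": [500, 20, 25],         # drops from 500 to 20
}

print(negative_odometer_changes(fleet["D7-001"]))
print(f"{share_of_vehicles_with_anomalies(fleet):.0%} of vehicles flagged")
```

A check like this catches only the most obvious errors. Plausible but wrong values pass through undetected.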

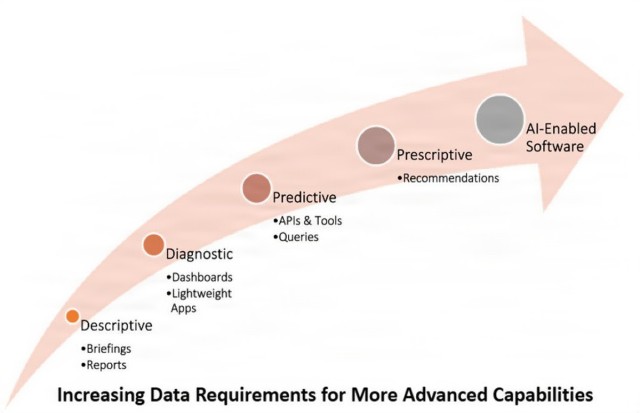

Any organization that relies on manually entered data must handle quality issues. The industry-standard approach is to rely only on validated entries, but the sheer volume of erroneous records in Army maintenance systems limits the effectiveness of this solution. What remains may be adequate for descriptive reporting or one-off analytic efforts, but it cannot sustain organization-wide predictive or prescriptive capabilities. More advanced AI and machine learning (ML) capabilities have greater demands for data quantity and quality. Regardless of the algorithms we use, state-of-the-art ML techniques suffer substantial drops in accuracy and reliability when exposed to inconsistent or incomplete training data. Until incentives are aligned to address data quality issues at the source, the Army’s analytic ambitions will rest on unreliable foundations.

Solutions

High-quality data emerges when the user gains clear value from entering it, the burden of reporting is reduced, and organizational pressures reward honesty rather than punish it. Any enduring solution must start by designing systems that support these conditions.

Increase Direct Soldier Value

Data quality improves when Soldiers see that accurate inputs directly benefit their mission, schedule, or workload. Systems should provide actionable insights to the lowest echelon, like maintainers, platoon sergeants, and staff sections, rather than only feeding enterprise-level dashboards. When users can visualize their data, track their own progress, or receive clear operational benefits, accuracy becomes self-reinforcing. When data helps the user, the user helps the data.

Reduce Costs, Friction, and Administrative Burden

Manual reporting often competes with operational demands. To improve quality, systems must shrink the time, effort, and cognitive load associated with accurate data entry. Modern interfaces, streamlined workflows, autofill, and interoperable systems reduce duplicated work and minimize the incentive to check the box in the fastest way possible. Automation should capture routine information so Soldiers only report data that must be collected manually.

Align Organizational Pressures with Honest Reporting

Reporting often rewards volume, timeliness, or high percentages rather than truth. Policies should reward realistic and accurate reporting rather than inflated performance metrics. Data requirements should be purposeful, not excessive, and aligned with lethality, readiness, and quality-of-life outcomes. By combining value-first design with smooth, scalable processes and honesty-driven organizational incentives, we can revitalize the Army’s data ecosystem and transform data entry from a burden to a mission enabler.

Promote Scalable, Modular, Soldier-Centered Tools

Not every need can, or should, be met with monolithic systems. For Soldier needs to drive development, the Army should prioritize the development of smaller, scalable, purpose-built applications. Solutions developed by unit-innovation teams or industry partners should be integrated through open standards with traditional systems of record. The result is a more adaptive and resilient data ecosystem that evolves with the needs of the force and encourages innovation without sacrificing enterprise integrity.

Framework for Success: AMAP

The Aviation Maintenance Analytics Platform (AMAP) demonstrates that building with incentives in mind naturally creates healthier data. AMAP is an experimental platform that gives users the ability to easily manage the requirements of the Aviation Maintenance Training Program (AMTP). The system provides direct value by allowing users to track and visualize trends in maintainer data. It reduces the cost of accurate reporting by streamlining a tedious process. Finally, it complements organizational pressures by filling established reporting requirements and giving commanders oversight into their data quality. The healthy data created through AMAP enables meaningful analysis into maintainer training.

AMAP was built in an environment where AMTP was entirely paper based. Tracking tasks, evaluations, and progression was impossible to maintain at scale. At best, packets were updated weekly for compliance. The priority was fixing aircraft on the hangar floor, not flipping through Department of the Army forms. Any data that was collected was not visible at the enterprise level. Soldiers and managers gained no meaningful benefit from accurately tracking maintainer progression, and commanders had no way to see where skill gaps might threaten readiness. Aviation had a training program, but no usable data to drive it. AMAP sought to tackle this problem through a user-centric design approach that addressed each aspect of incentive.

Direct Benefits

AMAP delivered immediate and obvious value to the user by transforming a reporting requirement into a quick and easy system that brought the data out of the binder and into the hands of users. This data is consolidated and displayed in intuitive dashboards, allowing maintainers to track progress, manage evaluations, and get deadline notifications without disrupting their workflow.

Direct Costs

AMAP dramatically reduced the cost of accurate reporting. Before AMAP, recording task completion depended on lulls in operational tempo, and week-long audits were often needed to retroactively clean data. Manually filling forms required entering the same redundant information and introduced risk of human error. AMAP removed the friction by digitally logging at the point of action and syncing with the relevant systems. Clean interfaces, autofill, and validation rules made errors less likely and more obvious.

Organizational Pressures

AMAP aligned with organizational incentives by streamlining an existing administrative requirement while strengthening buy-in across the chain of command. Commanders could create and update unit critical task lists that synced directly with maintainers’ individual critical task lists, giving Soldiers a clear view of their annual requirements. Supervisors could access real-time dashboards instead of manually compiling spreadsheets. The reduced workload and improved accuracy give everyone from junior maintainers to battalion staff officers a tangible incentive to keep AMTP data current.

By designing for the lowest level, automating away friction points, and creating shared value across the chain of command, AMAP cultivates engaged users and transforms maintainer progression data from a chore into a useful asset.

Analytic Potential

Beyond the benefit to the user, improved data quality enables the Army goal of higher-level predictive modeling and decision making. AMAP has been collecting data on maintainer progress and performance since 2023. With this dataset, the AMAP team developed a model to predict maintainers’ capability, demonstrating that maintainer progression follows consistent, quantifiable patterns. An initial analysis of this model shows that days in service, unique fault exposure, and average man-hours were the strongest predictors of capability. Because AMAP data is clean, structured, and reliable, it supports transparent and explainable analytics and provides a strong foundation for increasingly robust models over time.

AMAP’s success offers a blueprint for scaling data-driven initiatives across the Army: fix incentives, not just requirements, and quality data follows. Although an experimental product, AMAP has been adopted by units across the aviation community and is an accepted digital alternative for storing paper records.

Conclusion

Leveraging AI and achieving data-driven decision making will ultimately depend on the accuracy of the underlying data, not on the sophistication of our algorithms or computational power. To make lasting changes in data quality, we must address the incentive structure of the people who generate that data. When Soldiers gain no value from entering accurate data and leadership rewards compliance over honesty, errors are inevitable. The maintainer utilization and odometer mileage examples illustrate how misaligned incentives undermine analytics. The AMAP example proves that data quality improves naturally when systems deliver value to the user, reduce reporting burden, and align organizational pressures with ground truth. To realize the Army’s vision of AI-enabled readiness, we must design systems that make accuracy the easiest and most rewarding choice.

--------------------

CPT Gage Callahan serves as a data scientist at the Artificial Intelligence Integration Center in Pittsburgh, Pennsylvania. He previously served as a company executive officer for the 127th Airborne Engineer Battalion. He was commissioned as an engineer lieutenant and has transferred to FA49 Operations Research/Systems Analyst. He has a Master of Information Systems Management degree from Carnegie Mellon University.

CPT Thomas Canchola serves as a data scientist at the Artificial Intelligence Integration Center in Pittsburgh, Pennsylvania. He previously served as the 1st Battalion, 1st Air Defense Artillery Regiment, S-6 officer in charge. He was commissioned as a signal officer and has transferred to FA49 Operations Research/Systems Analyst. He has a Master of Information Systems Management degree from Carnegie Mellon University and a Master of Science degree in economics from Purdue University.

CPT Timothy Naudet currently serves as a data scientist at the Artificial Intelligence Integration Center in Pittsburgh, Pennsylvania. He previously served as a platoon leader. His military education includes Ranger School, Reconnaissance and Surveillance Leaders Course, Mortar Leaders Course, Airborne School, and Air Assault School. He has a Bachelor of Science degree in chemistry from the U.S. Military Academy and a Master of Information Systems Management degree from Carnegie Mellon University.

CPT Olivia Beattie serves as a data analyst at the Artificial Intelligence Integration Center. She previously served as an operations officer, executive officer, and platoon leader for 2nd Regiment General Aviation Support Battalion. She was commissioned as an aviation lieutenant and earned her rating as a UH-60 Black Hawk pilot. She has a Bachelor of Science degree in mechanical engineering from the U.S. Military Academy and a Master of Information Systems Management degree from Carnegie Mellon University.

CPT Bonvie Fosam serves as a data analyst at the Artificial Intelligence Integration Center. She previously served as the executive officer for Headquarters and Headquarters Company, 1st Signal Brigade. She also served as platoon leader of the 2nd Platoon of Alpha Company, 304th Expeditionary Signal Battalion–Enhanced. She was commissioned as a signal lieutenant. She has a Bachelor of Science degree from the U.S. Military Academy and a Master of Information Systems Management degree from Carnegie Mellon University.

CPT Adam Knapp serves as a data analyst at the Artificial Intelligence Integration Center. He previously served as the platoon leader of the 1st Platoon of 161st Engineer Support Company (A), 27th Engineer Battalion (A)(C). He was commissioned as an engineer lieutenant. He is a graduate of the Sapper Leaders Course. He has a Bachelor of Science degree from the U.S. Military Academy and a Master of Information Systems Management degree from Carnegie Mellon University.

--------------------

This article was published with the winter 2026 issue of Army Sustainment.
