ABERDEEN PROVING GROUND, Md. -- A group of Army scientists is working to map out the cognitive mechanisms of the human system in order to seamlessly integrate future military technology into the daily lives of Soldiers.

These scientists, from the U.S. Army Combat Capabilities Development Command's Army Research Laboratory, are not only trying to make life easier in the combat arena -- they are working to save lives.

One of these scientists, Dr. Chloe Callahan-Flintoft of the lab's Human Research and Engineering Directorate, is a contributing author and researcher on a study originally published on bioRxiv Aug. 31, 2018. The paper is also under review for publication in Psychological Review.

Callahan-Flintoft received her bachelor of science in math and psychology from Trinity College Dublin. She then earned her master of science in statistics at Baruch College - City University of New York.

She based her work primarily on research conducted with her graduate advisor at Pennsylvania State University while earning her doctorate in psychology. The research was funded mostly by a National Science Foundation grant.

Callahan-Flintoft now works with the Army through an Oak Ridge Associated Universities Fellowship; however, she is preparing to make the leap and join the lab's scientific research community full-time.

"I realized a lot of the problems I was interested in were highly applicable to tasks Soldiers face--such as how to strike a balance so that you are staying on task, like searching for a target, but not setting such rigid attentional filters that you don't see unexpected events," she said.

Her work focuses on visual attention and how the brain samples information in both space and time. Because the human brain can't process every visual input to the same extent, Callahan-Flintoft helped to create a conceptual framework that maps out the cognitive mechanism by which some inputs are prioritized over others.

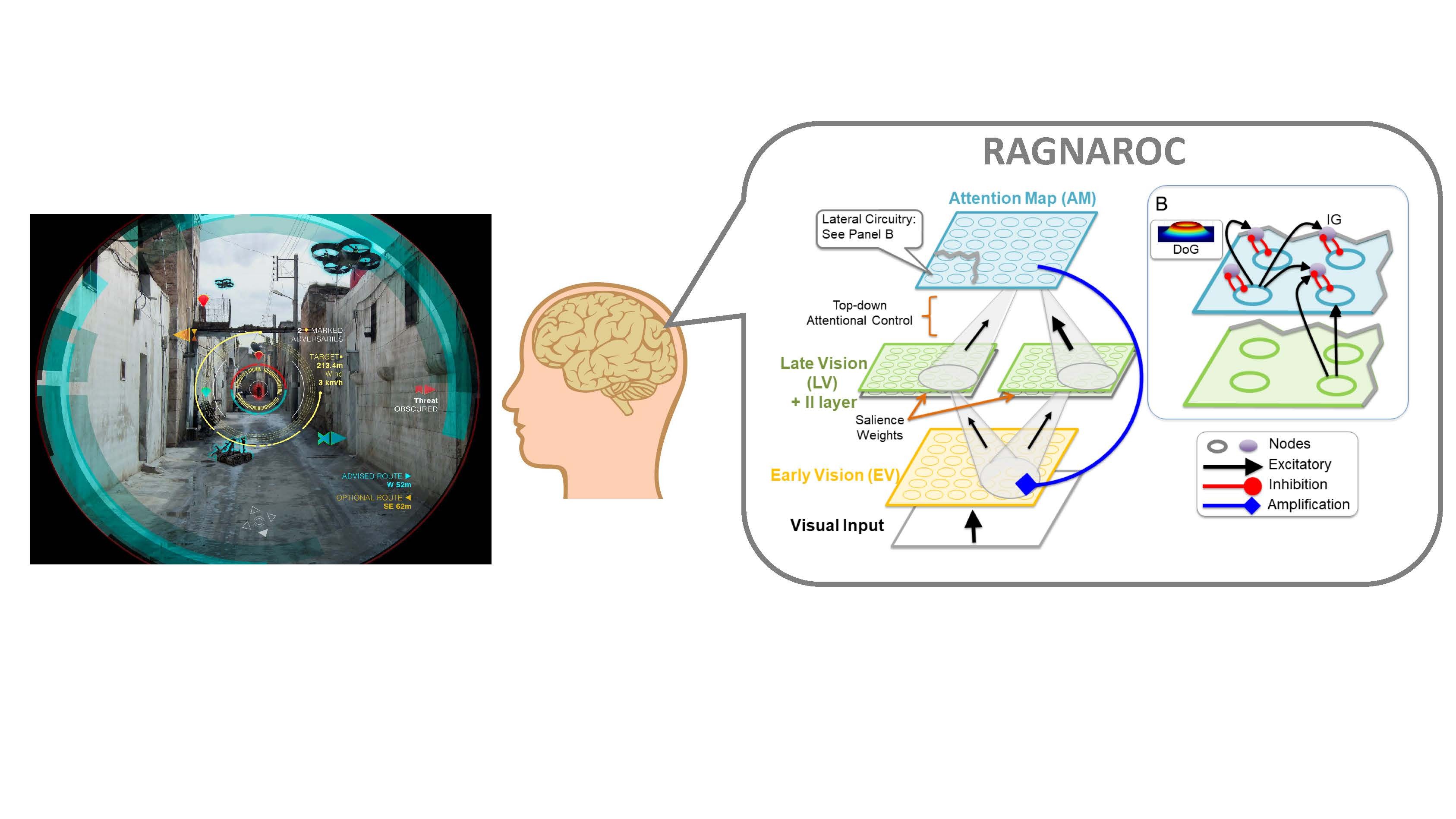

Her research produced a model that generates predictions of how the brain will respond in specific situations, in terms of both reaction time and accuracy. It maps out how information is input and processed in stages: Visual Input to Early Vision to Late Vision to the Attention Map, where attentional resources are allocated.

"Our environments present the human visual system with an abundance of changing information," she said. "To meet processing constraints the brain must select and prioritize some pieces of information over others."

Her model is advancing research in the field of visual attention, she said.

"RAGNAROC bridges two large bodies of literature in the attention community under one theory of reflexive visual attention by being able to simulate both human behavior as well as electrophysiology results," Callahan-Flintoft said. "In doing so, the model is able to account for seemingly conflicting findings in previous work such as why sometimes our attention is pulled away from a target towards a salient distractor and other times we're able to suppress that salient distractor and stay on task."

Because this research simulates how items in the human visual field compete for attention, it was a natural step for Callahan-Flintoft to become interested in how such a system behaves when observed objects are changing smoothly in time.

Callahan-Flintoft then built the attentional drag model from the framework. This model aims to explain why human attention stays engaged longer with smoothly changing objects than with abrupt changes: for example, smoothly shifting facial expressions versus an object appearing quickly from around a corner.

She is a contributing researcher and author on a paper titled "A Delay in Sampling Information from Temporally Autocorrelated Visual Stimuli." The paper covers her research on attentional drag and has been accepted for publication in Nature Communications.

These models are the foundation for future combat and wearable technology, she said.

"My work is aimed on the importance of understanding the human brain, its underlying cognitive mechanisms, and how to develop technology around the human operating system," Callahan-Flintoft said. "Our brains were built from functioning in the natural environment. It's integral to understand the organic machinery before applying the technical advances."

The quantifiable predictions produced from these models allow researchers to hypothesize and then test how the human visual system will respond to Augmented Target Recognition, or ATR, highlights displayed on Augmented Reality, or AR, eyewear.

"With the advent of AR, there is now new capabilities to display information to the Soldier overlaid on his/her visual field," Callahan-Flintoft said. "One such implementation is ATR highlights in which an AI system could encourage the visual attention of a user to potential threats. Ideally such highlights would provide helpful information to the Soldier without pulling the Soldier off task or causing a detriment to the Soldier's situational awareness."

Additionally, her work on the Human-AI Interactions for Intelligent Squad Weapons project is advancing knowledge of the cognitive mechanisms involved in attentional allocation, scene processing and decision making in order to advise ATR implementations. The project's objective is to make technology that complements rather than competes with natural visual processing.

Callahan-Flintoft envisions her work leading to AI systems that work in tandem with humans. Her goal is the effortless integration of humans and technology, she said.

______________________________________________________________________

The CCDC Army Research Laboratory is an element of the U.S. Army Combat Capabilities Development Command. As the Army's corporate research laboratory, ARL discovers, innovates and transitions science and technology to ensure dominant strategic land power. Through collaboration across the command's core technical competencies, CCDC leads in the discovery, development and delivery of the technology-based capabilities required to make Soldiers more lethal to win our Nation's wars and come home safely. CCDC is a major subordinate command of the U.S. Army Futures Command.
