ADELPHI, Md. (Army News Service) -- Army researchers are developing advances to enable autonomous robots to operate more like teammates and less like tools.

Key to achieving an effective robot-Soldier team will be enabling the robot to better understand the Soldier's or the commander's intent, said Joseph Conroy, an electronics engineer with the Electronics for Sense and Control team at the Army Research Laboratory.

The current generation of unmanned aerial and ground vehicles employed by the Army requires a human operator, and the job of interpreting the intelligence, surveillance and reconnaissance data is labor intensive, Conroy said, so analysis can take much longer than real time.

Current generations of vehicles also rely excessively on GPS connectivity for positioning, he said. Similarly, information acquisition, particularly video, relies on high bandwidth wireless communications.

MUM-T EXERCISE

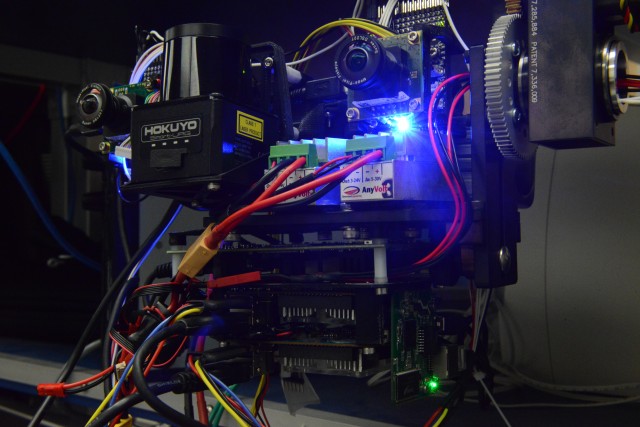

In late 2014, Army Research Laboratory personnel brought aerial robots to Fort Benning, Georgia, to test with the infantry in a Manned/Unmanned Teaming (MUM-T) exercise sponsored by the Army Training and Doctrine Command. The robots were representative of current commercially available capabilities and emerging capabilities developed through academic research.

The purpose of the exercise, Conroy said, was to determine how Soldiers could make use of autonomous systems in an operational setting. It confirmed that autonomous systems can be a battlefield asset, particularly maneuvering ahead of the Soldier, he said.

The robot could help a Soldier by identifying disturbed ground, which is a sign of a buried improvised explosive device. It could also examine the interior of a building and look around a corner or over a berm.

There is a sweet spot of autonomy, Conroy noted, where the robot is advanced enough not to be a burden for a Soldier, but not so advanced that it exhibits what is generally thought of as artificial intelligence.

"We want to push the level of autonomy up just enough so that there's a specific suite of behaviors the robot can execute very efficiently and reliably based on the commander's intent, with as little guidance as possible," he said.

FUTURE GENERATION

Army researchers envision a greater degree of on-board perception and processing to enable a wider variety of mission scenarios, enhanced robustness, and real-time intelligence, Conroy said.

Furthermore, a greater degree of intelligence could allow vehicles to work with the Soldier rather than be operated by the Soldier. However, he cautioned that care must be taken that the vehicle performs as the Soldier or commander expects. An autonomous system must be able to infer its operator's intent and desires for its behavior.

Enhanced localization capabilities in GPS-denied environments, or when constant communication with a base station is unavailable, could allow for environmental awareness and intelligence gathering even during radio frequency outages, he added.

Being able to perform intelligence, surveillance, and reconnaissance onboard the platform when communications or GPS goes down would be a huge advantage, Conroy said. The mission could still be completed and once communications are restored, the data could be dumped.

Writing the algorithms for such an intelligent military vehicle is even more challenging than designing a driverless car, according to Conroy, because military vehicles must be able to travel off-road in fog or brownout conditions with adversarial forces nearby and possible denial of wireless communications.

Once autonomous systems are capable of understanding their environment, rather than just relaying raw sensor information, typically in the form of video, to an operator, a wider range of mission support scenarios will be possible, Conroy predicted.

The autonomous systems would process the information about their environment onboard, using analysis and perception algorithms, before sending or saving the data, he said. The data would be greatly reduced in size from the original, thus freeing up bandwidth for other operations.

FOCUSING ON THE SOLDIER

Eventually, the Army researchers' efforts to enable efficient human-robot teaming may involve instrumenting the Soldiers themselves, said William Nothwang, team leader for the Electronics for Sense and Control team.

Nothwang said the lab is moving towards an effort called "Continuous, Multifaceted Soldier Characterization for Adaptive Technologies," which will focus on methods to assess and predict moment-to-moment changes in individual Soldier states under real-world conditions such as fatigue or stress.

"We design tools to the lowest capability to enable the maximum usage, meaning we leave a lot of capability, human capability, on the table," he said. "If we can understand a Soldier's current capability, and adapt the tool to that capability level, we can get a lot of that human capability back."

In other words, the robotic teammate would be capable not just of speech recognition, but also of understanding a Soldier's capability level and factoring that into its response to the current situation and its mission.

Sensors on the Soldiers like "next-generation Fitbits" might provide the robot with some of the required information, he added, but to be effective on the battlefield, whatever solution is employed for a Soldier-robot team must be scalable to many Soldier-robot teams.

Such a solution must also be lightweight and small in size, given all other required items Soldiers must carry. Conroy admitted that "we've just scratched the surface," and it will take years, if not decades, for the technology to mature.

Key research initiatives to enable useful systems include the development of the following:

-- Algorithms to enable obstacle avoidance for near-ground point-to-point navigation.

-- Geolocation sensing and inference that can provide a position solution without GPS for up to days at a time.

-- Target recognition and tracking algorithms that can reliably extract signals from data corrupted by noise.

-- Enhanced methods of enabling on-board processing of data, including leveraging commercial graphics processing units and development of application specific integrated circuits.

-- Speech recognition and physiological instrumentation that work in a real-world, tactical environment.

Conroy is part of a much larger multidisciplinary group of scientists and engineers interested in hitting the right balance of autonomy in robotics.

The MUM-T exercise also helped identify current technology gaps, along with the capabilities and research questions that would be most useful to focus on in near-term development.
