Army Test and Evaluation Command and Maneuver Center of Excellence experts identify five missteps in requirements development that can slow or halt a program in testing.

Poorly defined requirements that are not operationally linked and do not consider test implications can result in an unfavorable system evaluation, which can delay system fielding, increase testing and require system modifications.

For example: Russell is looking to buy a truck to take his family camper into the mountains. The operational need is a truck that can tow the family camper. Maximum speed and fuel efficiency are valid vehicle requirements; however, meeting those requirements will not guarantee that Russell can safely tow the camper into the mountains. Horsepower, towing capacity, vehicle braking and the presence of a tow hitch are better indicators of whether the selected truck will fill the need. An operational need and a requirement are linked when failure to meet the requirement will definitively result in the system's inability to fill the need.

Requirements define the system design that is necessary to fill the identified operational need. Testers design tests to determine whether the current system design fills that operational need. If the requirements do not reflect what is necessary to fill the need, then testing can show that a system in fact does not fill the need, despite meeting system requirements. The camper example demonstrates why it is vital that those with a stake in the requirements ensure that they reflect the needs of operational units, to avoid halting system development and fielding.

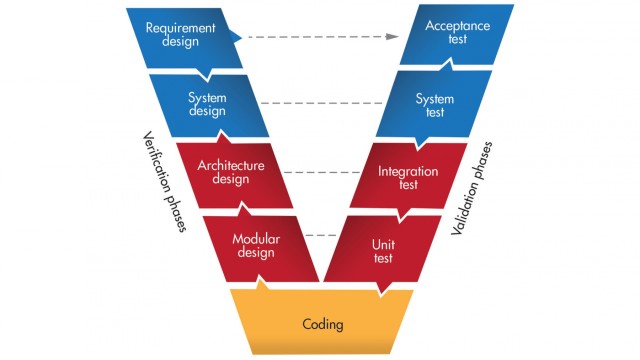

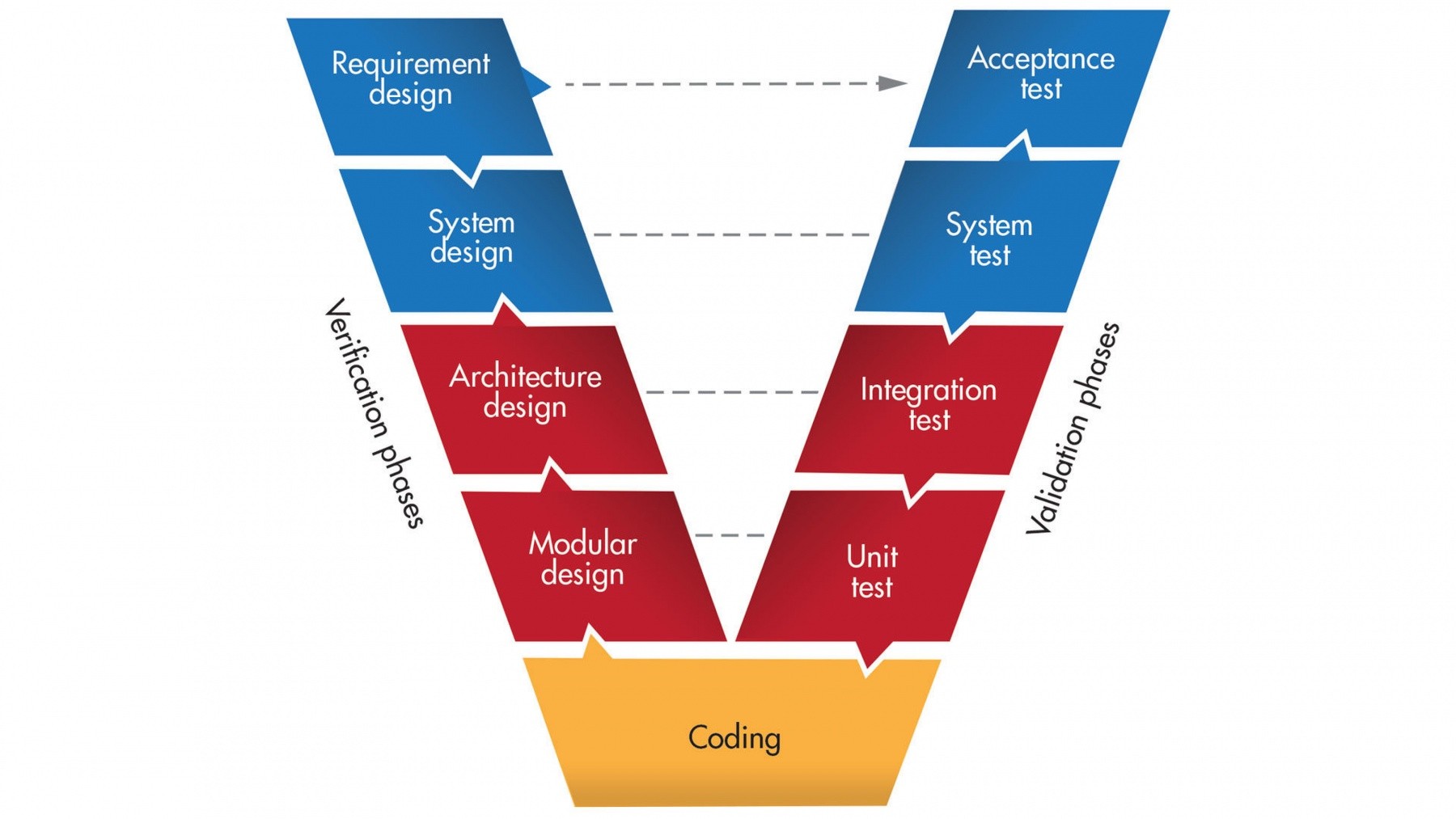

Requirements identify the essential questions that testing must answer to verify that the system provides the desired capabilities. The systems engineering "V" model demonstrates the importance of requirements and their relationship to testing. The V model can also be described as a pyramid, with requirements development and testing forming the base for delivering an effective system. Requirements also establish the level of statistical confidence and precision required for adequate verification of capabilities.

KNOWING YOUR ENVIRONMENT

The test and evaluation community has observed five common challenges to well-developed requirements. These enemies of sound requirements have stymied program development and increased the scope of testing. All acquisition stakeholders at all echelons must understand the risks these enemies carry with them and consider those risks while developing and staffing system requirements. Requirements development is a team effort, so requirements developers are encouraged to involve all stakeholders early in the process.

The following examples are actual requirements taken from recent Army requirements documents. The intent is to foster a practical understanding of the risks in requirements development and their potential impacts. In many cases, these examples were revised as part of the document staffing process.

CHALLENGE #1: NOT OPERATIONALLY FOCUSED

A best practice is to ask, "Do I still want this system if it can't meet this requirement?" Answering "yes" to this question probably means that the requirement is not linked to the operational need the system is intended to fill and should be revised or deleted. As an example of a requirement that is not operationally focused, consider this key performance parameter (KPP) for an artillery round.

KPP: Artillery round is effective against moving targets.

It is certainly possible to fire artillery rounds against a moving target. However, this is not the primary purpose or mission for artillery rounds; rather, it is something usually saved for extreme circumstances. This requirement increases the risk that the system fails the requirement in testing, potentially delaying system fielding. The requirement also drives lengthy and expensive testing because many factors, such as target type or range to target, could affect whether the round is effective against moving targets.

Requirements should support a complete, end-to-end operational evaluation of the entire system in support of the mission. It is dangerous to exclude subsystems, such as government-owned radios or sensors, or limit the requirement to specific domains, such as mechanical assessments. Subsystems, as part of the overall system, can impact its performance and reliability. Most systems are used in multiple domains and situations, factors that can affect performance as well. Failing to include these aspects in requirements development increases the risk of an unfavorable system evaluation because it equates to an attempt to exclude potential system failures that exist in reality.

CHALLENGE #2: OVERLY AMBITIOUS REQUIREMENTS

Stretching capabilities is a worthwhile goal, but one to approach with caution when developing requirements. Establishing a requirement that is difficult, perhaps even impossible, to meet can have several impacts on program success. One of them is that testing could fail a system that is providing a valuable capability, because it didn't meet a lofty requirement. A related risk is that overly ambitious requirements increase the evaluation's susceptibility to uncontrolled variables, as the following example from a sensor's requirements document highlights.

KPP: 99.9 percent probability of detection.

This requirement leaves very little room for the system to fail test iterations and still meet the requirement. This quest for perfection makes the evaluation more susceptible to uncontrolled variables, such as user error or something that has little to do with the core capability of the system. That is, the system might fail a test, but the reason could be a Soldier error or inclement weather.
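To make the arithmetic concrete, the sketch below estimates how many detection trials a test would need to demonstrate a required probability at a chosen confidence level, and how that number grows when a few missed detections are tolerated. It assumes independent pass/fail trials and a one-sided binomial demonstration test. The function names and the 80 percent confidence default are illustrative assumptions, not taken from the sensor's requirements document.

```python
from math import comb


def prob_at_most(failures, trials, p_success):
    """Binomial probability of seeing `failures` or fewer misses in `trials`."""
    q = 1.0 - p_success
    return sum(
        comb(trials, k) * q**k * p_success ** (trials - k)
        for k in range(failures + 1)
    )


def min_trials(p_required, confidence=0.80, failures_allowed=0):
    """Smallest trial count that demonstrates `p_required` at `confidence`.

    A system that only just meets the requirement should pass the test
    with probability no greater than 1 - confidence.
    """
    n = failures_allowed + 1
    while prob_at_most(failures_allowed, n, p_required) > 1.0 - confidence:
        n += 1
    return n


# Assumed scenario: a 99.9 percent requirement evaluated at 80 percent confidence.
for allowed in (0, 1, 2):
    print(f"misses tolerated: {allowed}, trials needed: {min_trials(0.999, failures_allowed=allowed)}")
```

Under these assumptions, a 99.9 percent requirement needs on the order of 1,600 trials with no misses at all, and each tolerated miss adds more than a thousand further trials. A single miss caused by Soldier error or weather can therefore decide the outcome of the evaluation.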

Another problem is requiring too high a level of statistical confidence. Statistical confidence is a scientific parameter used to make sure the test produces enough data to show that the demonstrated results are representative of the system at any time it is used during its life cycle. A statistical confidence of 80 percent is the Army-accepted best-practice standard for an adequate test design. Reducing the statistical confidence below 80 percent increases the risk that the demonstrated test results are not representative of the system's actual performance. Statistical confidence can be reduced below 80 percent in specific scenarios, however, when circumstances or resource constraints require the acquisition community to assume more risk.

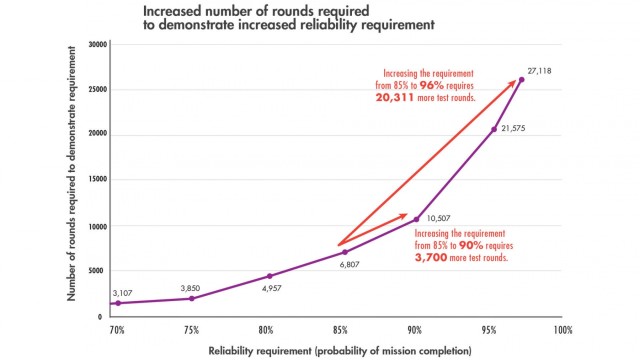

A big problem with ambitious requirements is the necessity to increase the amount of data required to ensure that the system is meeting the requirement with statistical confidence. The graphic "Small increase, big cost" demonstrates the impact that ambitious requirements could have on the design of weapon system reliability testing. The greater the number of rounds needed for an acceptable test, the more costly the test will be, in time and money.
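The relationship between the required level of performance and the amount of test data can be sketched with the zero-failure (success-run) sample-size formula, n = ln(1 - C) / ln(R), where R is the required reliability and C is the statistical confidence. This is a textbook approximation that assumes independent trials and no failures during the test. It is not the method behind the graphic, and the reliability and confidence values below are hypothetical.

```python
import math


def zero_failure_sample_size(reliability, confidence=0.80):
    """Trials needed, all successful, to show `reliability` at `confidence`."""
    if not (0.0 < reliability < 1.0 and 0.0 < confidence < 1.0):
        raise ValueError("reliability and confidence must be between 0 and 1")
    return math.ceil(math.log(1.0 - confidence) / math.log(reliability))


# Hypothetical requirement and confidence levels, for illustration only.
for reliability in (0.90, 0.95, 0.99, 0.999):
    for confidence in (0.80, 0.90):
        n = zero_failure_sample_size(reliability, confidence)
        print(f"reliability {reliability:.3f}, confidence {confidence:.2f}: {n} failure-free trials")
```

In this simplified model, each step toward a more demanding requirement multiplies the number of trials, and raising the confidence level increases it further, which is the pattern of small increases carrying big costs.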

Ambitious requirements have their place, but it is critical that acquisition stakeholders determine whether the potential benefits are worth the additional risk of failure and the necessary resources. The requirements development process should include an analysis showing that the ambitious level of performance is necessary to complete the mission.

CHALLENGE #3: EXTRA OR INFREQUENTLY USED REQUIREMENTS

Extra or infrequently used requirements also can unnecessarily increase the resources required to support system development and testing, as well as the risk that the system fails to meet its requirements. Remember: system requirements drive the system design. Materiel developers may consider design factors that they otherwise would not consider in order to support these extra or infrequently used requirements. These decisions can result in a suboptimal system design as well as the expenditure of research and development funds to develop the required capability.

The design impacts created by extra or infrequently used requirements can increase program costs in both the development and sustainment phases. The test and evaluation community must design a test to verify that the system meets such a requirement in the expected combat environment. The risk that the system fails increases because the conditions surrounding the requirement may be difficult to meet. The example below from an unmanned ground system demonstrates some of these challenges.

KPP: Unmanned system control. The system controller must have the ability to achieve and maintain active and/or passive control of any current Army and Marine Corps battalion and below level unmanned (air or ground) system and/or their respective payloads in less than three minutes.

This KPP requires development of a universal controller that operates with all Army and Marine Corps unmanned air and ground systems. The benefits of a universal controller are obvious: It drives commonality and reduces the number of pieces and parts the unit has to carry and maintain. But the challenges of such a broad requirement are less obvious: It drives a hardware and software solution that is capable of interfacing with numerous unmanned systems, all of which likely have different interface exchange requirements. That increases the risk that the controller cannot interface with one or more unmanned systems, thereby failing the requirement. Additionally, the test and evaluation community must design a test to verify that the controller can control all unmanned systems; such a test could prove to be lengthy and expensive, depending upon the number of interfaces required.

These requirements will work only to the extent that they're carefully considered within the scope of the intended mission, and their feasibility is within the scope of the time, resources and risk of system development. An alternate course could have been to focus the requirement on the most commonly used unmanned systems.

CHALLENGE #4: OVERLY PRECISE REQUIREMENTS

System requirements frequently include a questionable level of precision in their quantitative performance metrics. Stakeholders may want to ensure that the system is effective, hold the contractor accountable for delivering the desired capability or make sure the requirement is testable. While these are valid objectives, we need to exercise caution when including precise metrics.

Few Americans would tell their car dealer that they are looking for a car that gets no less than 30.01 miles per gallon. This level of precision excludes potentially valid materiel solutions, increases the risk that the system will not meet the requirement and will likely increase testing costs.

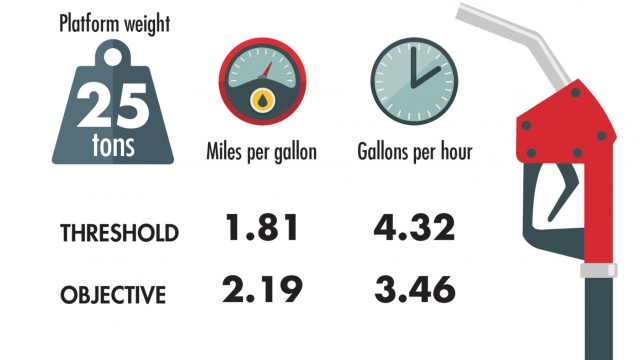

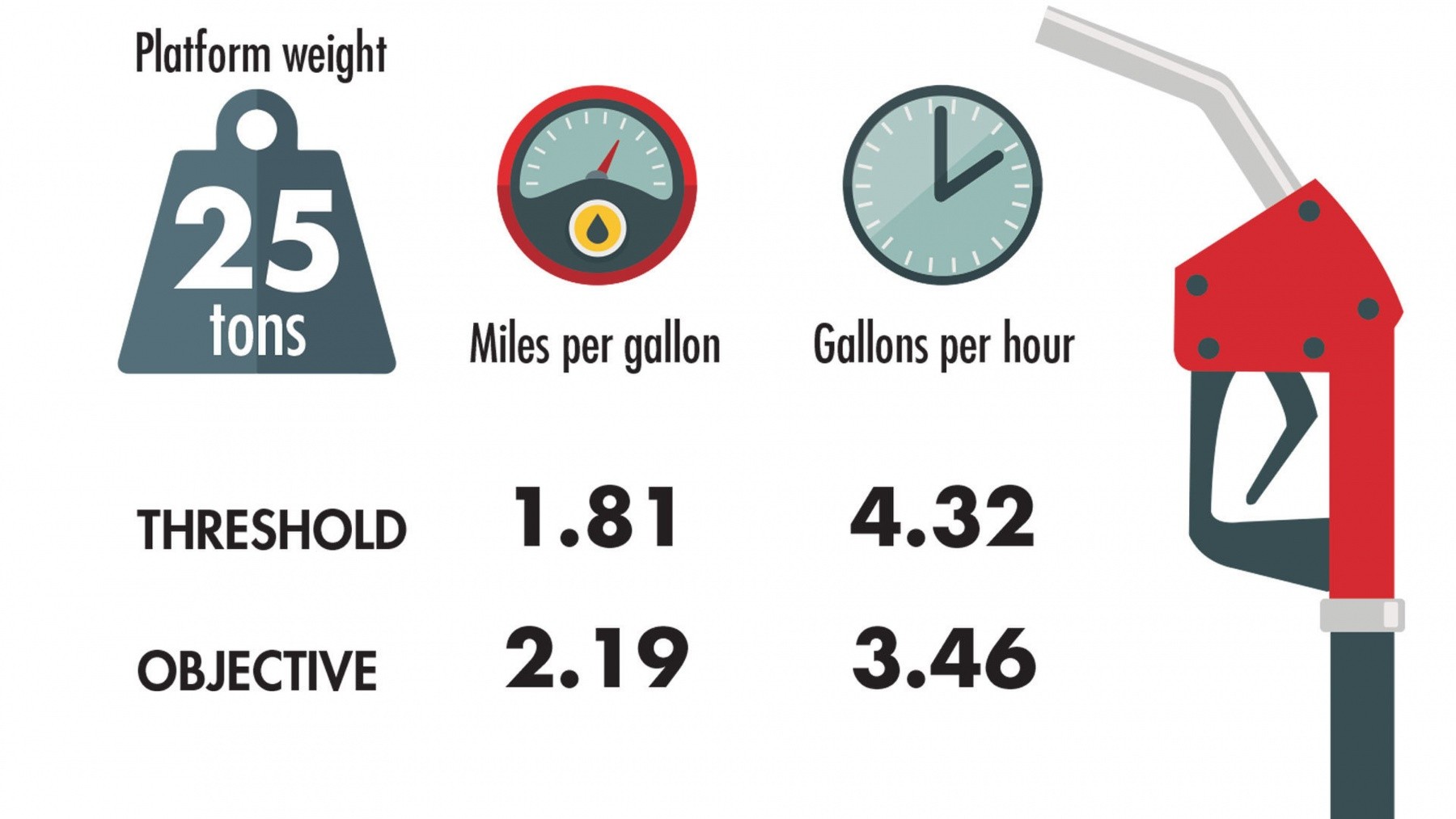

Precise requirements are often too technical and therefore difficult to link directly to the desired operational capability. The graphic "How precise is too precise?" is an example of these challenges from a combat vehicle program. The vehicle is required to demonstrate a fuel consumption rate to the one-hundredth of a mile per gallon and the one-hundredth of a gallon per hour at 25 tons. The challenge is that this requirement drives a lengthy and expensive test program to verify performance down to the one-hundredth level with statistical confidence.
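A rough way to see why extra decimal places are expensive: the number of test runs needed to estimate an average to within a margin of error E grows with 1/E². The sketch below uses the standard normal-approximation sample-size formula n = (z·σ/E)². The run-to-run standard deviation of 0.10 miles per gallon is a hypothetical value chosen only for illustration, not data from the combat vehicle program, and the two-sided 80 percent confidence level is likewise an assumption.

```python
import math
from statistics import NormalDist


def runs_for_margin(std_dev, margin, confidence=0.80):
    """Runs needed to estimate a mean within +/- `margin` (normal approximation)."""
    z = NormalDist().inv_cdf(0.5 + confidence / 2.0)  # two-sided critical value
    return math.ceil((z * std_dev / margin) ** 2)


SIGMA_MPG = 0.10  # hypothetical run-to-run variation, miles per gallon

for margin in (0.05, 0.005):  # resolve to the nearest tenth vs. the nearest hundredth
    print(f"+/- {margin} mpg: {runs_for_margin(SIGMA_MPG, margin)} runs")
```

Tightening the margin by a factor of 10 raises the run count by roughly a factor of 100, whatever the assumed variation.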

At times, requirements should include precise quantitative metrics. The goal of the requirements development process should be ensuring that the requirements represent the bottom-line standards of performance that the unit needs. Is the Army commander going to say that this vehicle doesn't adequately support the mission if it only gets 1.80 miles per gallon at 25 tons? Perhaps a more effective requirement is how long the vehicle must be able to operate before logistical resupply.

CHALLENGE #5: OVERLOOKING SUPPORTABILITY

System supportability is a major contributor to operation and sustainment costs and a major component of a system's suitability. Supportability and sustainment considerations must be built into the engineering process at the start to streamline development and minimize future risks. If requirements development does not take into account system supportability, testing can demonstrate that the system is not supportable or sustainable.

The result could be a significant increase in program cost, because the materiel developer will have to develop solutions to supportability problems later in the engineering process. The requirements development process must consider the maintenance and repair requirements and conditions to ensure that those capabilities exist when the system is fielded.

CONCLUSION

The primary purpose of test and evaluation is to provide decision-makers the essential information needed to determine a system's readiness to proceed to the next program milestone or fielding. Requirements need to lay a foundation that supports achieving this goal.

Requirements that are not focused on the desired operational capability can delay system fielding and increase test costs. They can add unnecessary testing as the test and evaluation community tries to confirm that a system meets a requirement that is not critical to the system's desired capability. Poorly developed requirements can also increase the scope of existing tests.

Operationally linked requirements ensure that acquisition stakeholders are asking the correct questions and are focusing efforts on providing the desired capability that will help our Soldiers on the battlefield.

For more information, go to www.atec.army.mil and www.benning.army.mil/MCoE/CDID.

JOSHUA R. BARKER is the Armored Brigade Combat Team Division chief at the U.S. Army Test and Evaluation Command, Aberdeen Proving Ground, Maryland. He has nearly 17 years' experience designing and executing test and evaluation strategies for Army combat vehicles and networked systems. He has a B.S. in mathematics from Bethany College. He is an Army Acquisition Corps member and is Level III certified in test and evaluation.

DON SANDO is director of the Maneuver Capabilities Development and Integration Directorate, U.S. Army Maneuver Center of Excellence, Fort Benning, Georgia. He was selected to the Senior Executive Service in February 2008 after retiring from the Army as a colonel with 26 years of active service. He has an M.S. in operations research from the U.S. Air Force Institute of Technology, an M.S. in strategic studies from the U.S. Army War College and a B.S. from the United States Military Academy at West Point. He is a member of the Association of the United States Army.

This article is published in the Winter 2020 issue of Army AL&T magazine.
